Added comment explaining group norm behavior in ggml.h #813

Open

balisujohn wants to merge 1 commit into ggml-org:master from

Member

@leejet since you wrote …

Contributor

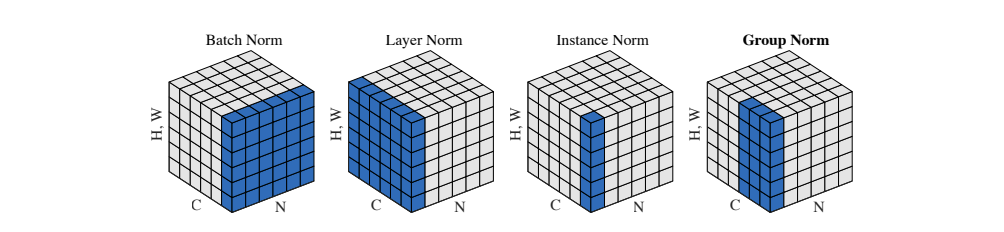

ggml_group_norm is not computed over the first two dimensions alone. Instead, it splits ne2 (equivalent to num_channels in PyTorch) into n_groups groups (n_groups is equivalent to num_groups in PyTorch), then computes the mean and variance over each group of channels together with dimensions ne0 and ne1. Since most uses of group norm involve 3- or 4-dimensional tensors, I simply assumed the group partitioning occurs along ne2. I've previously commented in the API, …

Adds a comment explaining behavior described in this issue: #803