Releases: skypilot-org/skypilot

SkyPilot v0.12.0

SkyPilot v0.12.0: Slurm Support, Job Groups for RL, Agent Skill, Recipes, Pool Autoscaling for Batch Inference, 7x Data Mounting, and More

SkyPilot v0.12.0 brings major new capabilities: Slurm integration for running SkyPilot on existing Slurm clusters, Job Groups for heterogeneous parallel workloads like RL training, an Agent Skill that teaches AI coding agents to use SkyPilot, Recipes for sharing reusable YAML templates across teams, and significant Pool enhancements including autoscaling. This release also drops Python 3.7/3.8, with Python 3.9+ now required.

Get it now with:

uv pip install "skypilot[all]>=0.12.0"Or, upgrade your team SkyPilot API server:

NAMESPACE=skypilot

RELEASE_NAME=skypilot

VERSION=0.12.0

helm repo update skypilot

helm upgrade -n $NAMESPACE $RELEASE_NAME skypilot/skypilot \

--set apiService.image=berkeleyskypilot/skypilot:$VERSION \

--version $VERSION --devel --reuse-valuesDeprecations & Breaking Changes:

- Dropped Python 3.7 and 3.8 support—Python 3.9+ is now required (#8489).

sky.jobs.queue(version=1)is deprecated and will be removed in v0.13. Usesky.jobs.queue(version=2)instead. The new version returns richer job metadata as dictionaries (#9118).

Highlights

[New] Slurm Support

SkyPilot now supports connecting your Slurm clusters, bringing its unified interface to one of the most widely used job schedulers in high-performance computing (#5491, #8198, #8219, #8268, #8291, #8470, #8604, #8729, and 25+ additional PRs). Users can launch SkyPilot clusters and managed jobs on Slurm clusters with the same CLI and YAML they use for cloud and Kubernetes, enabling seamless workload portability across all AI infra.

Key capabilities include:

- Multi-node clusters with partition-level resource management

- Container support via pyxis/enroot for reproducible environments

- SSH ProxyJump for clusters behind bastion hosts

- GPU availability viewing with

sky show-gpusfor Slurm partitions - Custom sbatch directives via

sbatch_optionsin task YAML - Configurable workdir and tmpdir for shared filesystem environments

- Admin policy support for Slurm partition routing

# Launch on a Slurm cluster

resources:

accelerators: H100:8

infra: slurm# View GPU availability across Slurm clusters

sky show-gpus --infra slurm

# Launch a training job on Slurm

sky launch --infra slurm/my-cluster train.yaml[New] Agent Skill: AI Agents Meet SkyPilot

SkyPilot now ships an official Agent Skill that teaches AI coding agents—Claude Code, Codex, and others—how to use SkyPilot (#8823, #9017, #9037). With the skill installed, your agent can launch clusters, run managed jobs, serve models, compare GPU pricing, and manage cloud resources—all through natural language.

Install the skill (docs) by telling your agent:

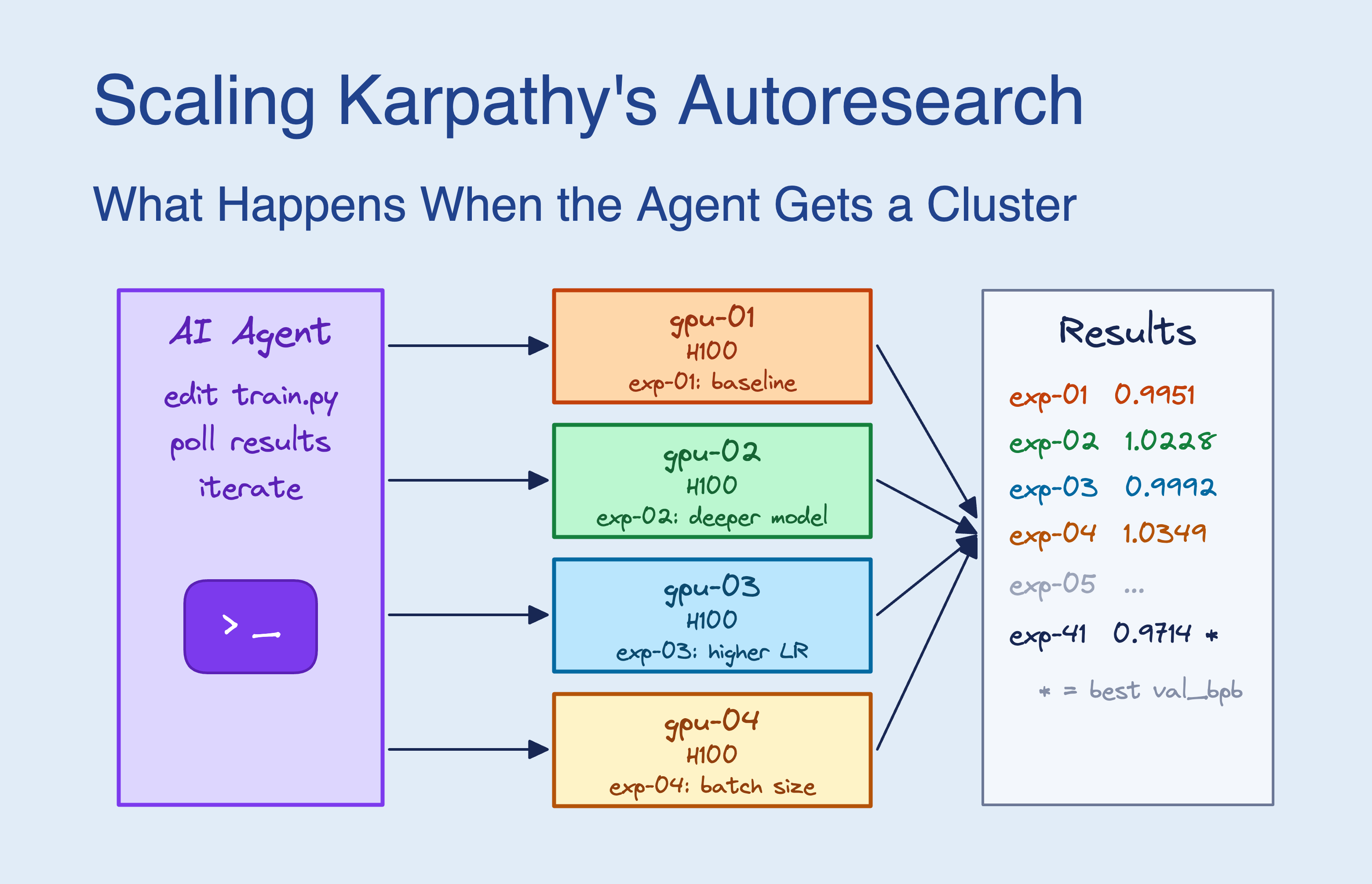

Fetch and follow the install guide https://github.com/skypilot-org/skypilot/blob/HEAD/agent/INSTALL.mdIn our Scaling Autoresearch blog post, we gave Claude Code the SkyPilot agent skill and access to a 16-GPU Kubernetes cluster. Over 8 hours, the agent autonomously submitted ~910 experiments in parallel, achieving a 9x speedup over sequential search—and even discovered hardware-specific optimizations on its own.

Example interactions your agent can now handle:

| Capability | Example Prompt |

|---|---|

| Launch dev clusters | "Launch a cluster with 4 A100 GPUs. Auto-stop after 30 min idle." |

| Fine-tune models | "Fine-tune Llama 3.1 8B on my dataset at s3://my-data. Use spot instances." |

| Distributed training | "Run PyTorch DDP training across 4 nodes with 8 H100s each." |

| Serve models | "Deploy Llama 3.1 70B with vLLM. Autoscale 1-3 replicas based on QPS." |

| Compare pricing | "What's the cheapest 8x H200 across AWS, GCP, Lambda, and CoreWeave?" |

| Multi-cloud failover | "Submit jobs that try our Slurm cluster first and fall back to AWS." |

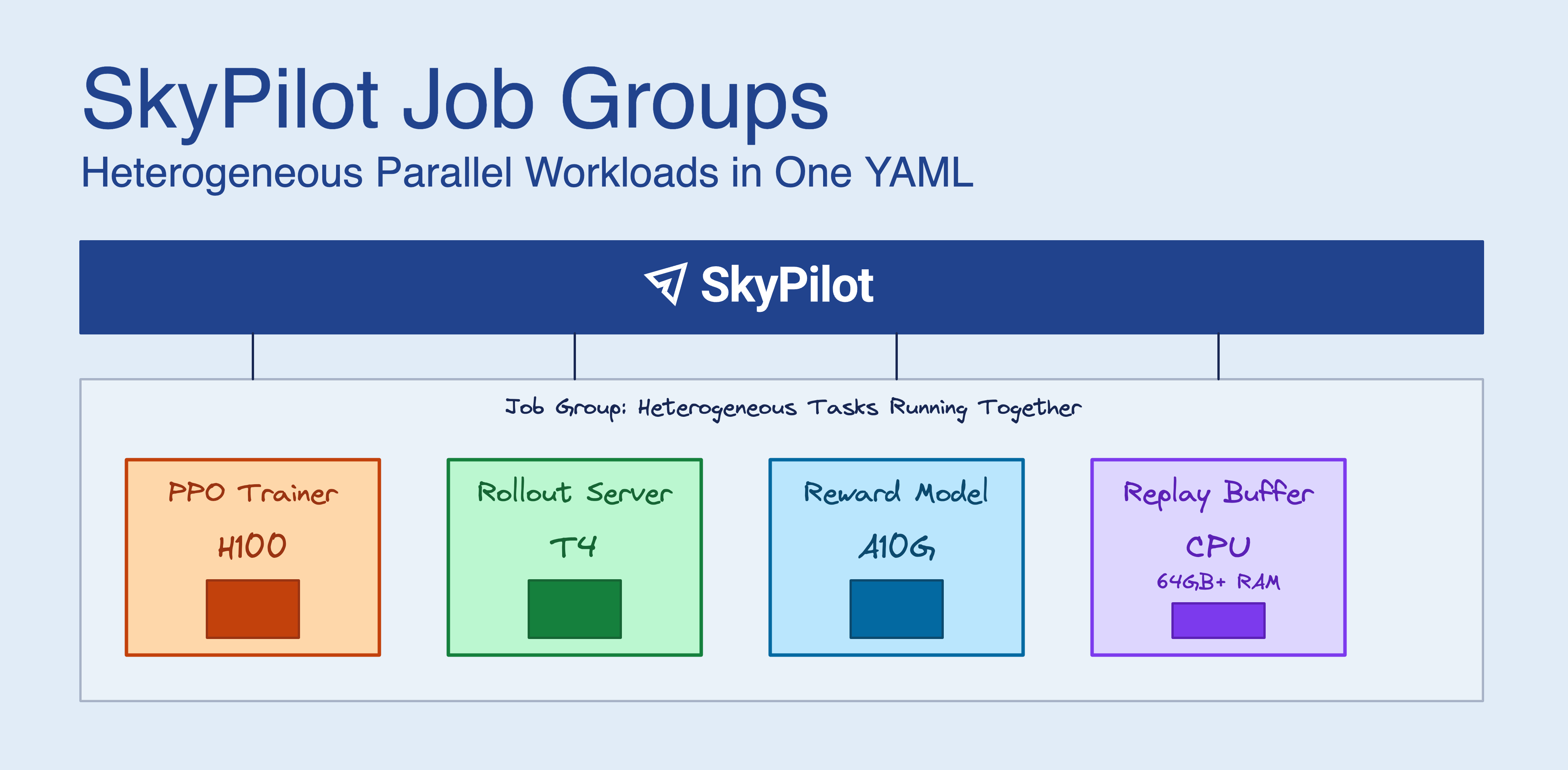

[New] Job Groups: Heterogeneous Parallel Workloads

SkyPilot Job Groups let you define multiple tasks with different resource requirements that run together as a single managed job (#8456, #8664, #8686, #8688, #8713, #8940). This is ideal for reinforcement learning workflows where training, inference, and data servers need different hardware—H100s for policy training, cheaper GPUs for rollout inference, and high-memory CPUs for replay buffers.

SkyPilot provisions all resources together, configures networking automatically with built-in service discovery ({task_name}-{node_index}.{job_group_name}), and manages the full lifecycle as one unit.

# Multi-document YAML for a Job Group

---

name: rl-training

execution: parallel

primary_tasks: [ppo-trainer]

---

name: data-server

resources:

cpus: 4+

run: |

python data_server.py --port 8000

---

name: ppo-trainer

resources:

accelerators: H100:1

run: |

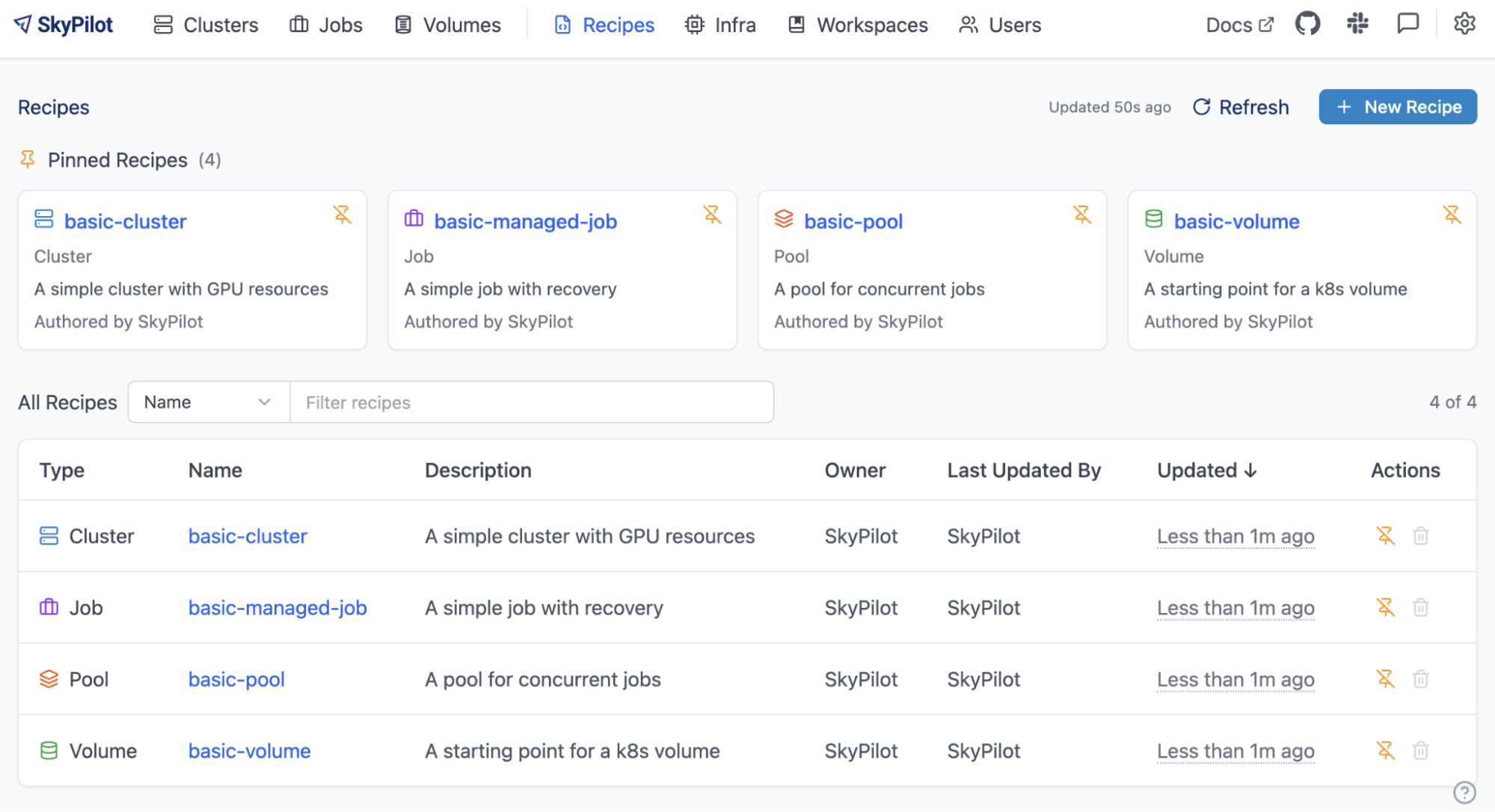

python ppo_trainer.py --data-server data-server-0.rl-training:8000[New] Recipes: Shared YAML Registry

Recipes allow teams to store and share SkyPilot YAMLs in a centralized, team-accessible registry (#8755, #8825, #8851, #8873, #8876, #8882). Launch standardized workloads directly from the CLI or dashboard without local YAML files, reducing DevOps overhead and ensuring consistent configurations across the team.

Recipes support clusters, managed jobs, pools, and SkyServe, with built-in validation that blocks local file dependencies to ensure portability.

# Launch a recipe directly

sky launch recipes:dev-cluster

# Launch with custom overrides

sky launch recipes:gpu-cluster --cpus 16 --gpus H100:4 --env DATA_PATH=s3://my-dataPool Autoscaling & Enhancements

SkyPilot Pools receive major upgrades with autoscaling, multiple jobs per worker, heterogeneous pools, and memory-aware scheduling (#8483, #8192, #8315, #8279, #8509, #7891).

- Autoscaling (#8483): Pools now automatically scale workers up and down (including to zero) based on queue length, maximizing GPU utilization while minimizing cost.

- Multiple jobs per worker (#8192): Workers can now run multiple concurrent jobs, improving resource utilization for smaller workloads.

- Heterogeneous pools (#8315): Pools can now contain workers with different resource configurations.

- Memory-aware scheduling (#8279): The pool scheduler now considers memory requirements when assigning jobs to workers.

- Fractional GPU improvements (#8509, #8480): Fixed fractional GPU support across multiple workers and corrected dashboard display.

# autoscaling-pool.yaml

pool:

min_workers: 0

max_workers: 10

resources:

accelerators: H100

setup: |

echo "Setup complete!"Dashboa...

SkyPilot v0.11.2

SkyPilot v0.11.2: Slurm Support, JobGroups, Enhanced Pools, External Links, Autostop Hooks, 7x data mount speed up and More

SkyPilot v0.11.2 delivers Slurm support in Beta, JobGroups for heterogeneous parallel workloads, and significantly enhanced Pools with autoscaling, multi-job scheduling and heterogeneous GPU support. This release also brings Autostop Hooks, 7x MOUNT_CACHED mode uploads speed up, automatic EFA on EKS, and numerous admin, security, and performance improvements.

Get it now with:

uv pip install "skypilot>=0.11.2"Or, upgrade your team SkyPilot API server:

NAMESPACE=skypilot

RELEASE_NAME=skypilot

VERSION=0.11.2

helm repo update skypilot

helm upgrade -n $NAMESPACE $RELEASE_NAME skypilot/skypilot \

--set apiService.image=berkeleyskypilot/skypilot:$VERSION \

--version $VERSION --devel --reuse-valuesBreaking Change: Python 3.9+ required — Python 3.7 and 3.8 are no longer supported (#8489). Please upgrade before installing this release.

Highlights

[Beta] Slurm Support

SkyPilot now supports Slurm as a new infrastructure backend, enabling users to orchestrate workloads on HPC clusters alongside cloud VMs and Kubernetes — all through the same unified interface (docs, #5491, #8138).

This release brings comprehensive Slurm capabilities:

- Multi-node distributed workloads — run distributed training jobs across multiple Slurm nodes with proper environment variable propagation and per-node logging (#8219)

- Containerized execution via NVIDIA pyxis/enroot — specify Docker images with

--image-idfor reproducible, GPU-accelerated workloads (#8604, #8609) - Resource-scoped SSH sessions —

ssh <cluster>drops you inside the Slurm job allocation, sonvidia-smicorrectly reflects only your allocated GPUs (#8268) - Interactive SSH authentication (2FA, password prompts) for clusters requiring keyboard-interactive auth (#8317)

- Partition support — Slurm partitions are mapped to SkyPilot zones, enabling partition-aware scheduling (#8198)

- Multi-cluster support — configure and use multiple Slurm clusters simultaneously

- Dashboard integration — Slurm clusters appear in the infrastructure page with status and GPU utilization

See the Slurm documentation for setup instructions.

JobGroups: Heterogeneous Parallel Workloads

JobGroups enable running multiple jobs with different resource requirements together as a managed group (blog, #8456). Define multi-task pipelines in a single multi-document YAML file:

# Header: job group metadata

name: rl-training

execution: parallel

primary_tasks: [trainer]

termination_delay: 30s

---

name: trainer

resources:

accelerators: A100:8

run: python train.py

---

name: reward-server

resources:

accelerators: A100:1

run: python reward_server.pyKey capabilities:

- Parallel cluster launch and monitoring — all jobs launched and tracked concurrently

- Inter-job networking — easy task name based hostname discovery (.) for job-to-job communication

- Dashboard UI — expandable rows for multi-task groups, task-specific log filtering

- CLI support —

sky jobs logs --task-name <name>for viewing specific task logs

Example applications included: RL post-training (RLHF) pipeline and parallel train-eval pipeline.

Enhanced Pools: Multi-Job Scheduling and Heterogeneous GPUs

SkyPilot Pools receive significant upgrades in this release:

- Multiple jobs per worker — the scheduler now performs resource-aware bin-packing, tracking CPU, memory, and accelerator usage to fit multiple jobs on a single worker (#8192, #8279)

- Autoscaling — pools can now automatically scale workers up and down (including to zero) based on queue length. Specify

min_workers,max_workers, and target queue length; aQueueLengthAutoscalerhandles the rest while protecting running jobs from cancellation (#8483) - Heterogeneous GPU support — specify

any_ofresource configurations and the scheduler dynamically resolves to available hardware (#8315):

resources:

any_of:

- accelerators: T4:1

- accelerators: A100:1- 9x faster concurrent job launch — launching 100 concurrent jobs reduced from 4.5 minutes to 30 seconds using the new

--num-jobsargument (#7891) - Fractional GPU scheduling fix — fractional GPU jobs now correctly schedule across all workers (#8509)

External Links

SkyPilot dashboard now automatically detects your W&B links generated by your AI workloads. No need to dig into the job logs to figure out where your training panels are. (#8405)

Autostop Hooks

An autostop hook mechanism allow running custom scripts before a cluster is automatically stopped — for example, to save checkpoints, sync W&B runs, or send Slack notifications (#8412):

resources:

autostop:

idle_minutes:10

hook:|

wandb sync

curl -X POST $SLACK_WEBHOOK -d '{"text": "Cluster shutting down"}'

hook_timeout:300- New

sky logs --autostopcommand to view hook execution logs sky execis rejected onAUTOSTOPPINGclusters;sky launchwaits for autostop to complete before restarting

Automatic EFA Setup on Amazon EKS

SkyPilot now automatically configures Elastic Fabric Adapter (EFA) on EKS with a single flag (#8557):

resources:

network_tier: bestThis automates what was previously a complex manual setup, delivering ~78.8 GB/s inter-node bandwidth (vs ~4.1 GB/s without EFA), critical for distributed training performance. EFA interfaces are allocated proportionally to the requested GPU count.

7x MOUNT_CACHED Uploads Speed Up

Parallel uploads are now the default for MOUNT_CACHED file mounts, delivering a 7x speedup — flush time dropped from 151s to 21s for a ~14.6 GB test workload (#8455). A new data.mount_cached.sequential_upload config option allows reverting to sequential uploads if needed.

Exit Code-Based Job Recovery

Users can now specify exit codes that trigger automatic job recovery in managed jobs (#8324):

resources:

job_recovery:

recover_on_exit_codes:[29]When a job exits with a specified code, SkyPilot automatically recovers it — useful for transient failures with known error codes.

Windows WSL Support

Automatically detect that SkyPilot is running in WSL, and seamless set up VSCode Remote-SSH for Windows users (#8669)

Admin Deployment Improvement

- External authentication proxy support — deploy behind AWS ALB with Cognito, Azure Front Door, or custom SSO proxies; supports both plaintext and JWT header formats (#8751)

- Sidecar container support — Istio, Datadog, and other sidecar injection no longer breaks K8s provisioning; SkyPilot explicitly targets the

ray-nodecontainer (#8353, #8444)

What’s New

Kubernetes

- Improved Kueue integration — 24-hour provisioning timeout, workspace-level queue configuration, controller pod exclusion, and pod annotations (#8484)

- GPU detection fix — L40S no longer misidentified as L4; added Blackwell, newer Hopper, and Ada Lovelace GPUs (#8593)

kubernetes.set_pod_resource_limits— set pod CPU/memory limits relative to requests for pod resource limit enforcement ([#8644](https://github.com/skypilot-org/skypilot/pul...

Release v0.11.2rc1

This patch release is a minor bump from v0.11.1 to to get you the latest fixes:

- Always set SSH key permission to avoid issue when jobs controller restarts by high availability setting #8316

Install the release candidate:

# Select needed clouds.

uv pip install 'skypilot[kubernetes,aws,gcp]==0.11.2rc1'

Upgrade your remote API server:

NAMESPACE=skypilot # TODO: change to your installed namespace

RELEASE_NAME=skypilot # TODO: change to your installed release name

helm repo update skypilot

helm upgrade -n $NAMESPACE $RELEASE_NAME skypilot/skypilot \

--version 0.11.2-rc.1 \

--reset-then-reuse-values \

--set apiService.image=null # reset to default image

Full Changelog: v0.11.1...v0.11.2rc1

SkyPilot v0.11.1

This patch release is a minor bump from v0.11.0 to to get you the latest fixes:

- #8285: Fix an issue where the API server request database is incorrectly locked causing an irresponsive API server with an error:

sqlite3.OperationalError: database is locked

See the full v0.11 release notes for everything new in SkyPilot v0.11!

SkyPilot v0.11.0

SkyPilot v0.11.0: Multi-Cloud Pools, Fast Managed Jobs, Enterprise-Readiness at Large Scale, Programmability

SkyPilot v0.11.0 delivers major new features: Pools and Managed Jobs Consolidation Mode; significant improvement enterprise-readiness at large scale: supports hundreds of AI engineers with a single API server instance, avoid OOM, >10x performance improvement on many requests, additional observability, and more; and UX improvements: Templates, Python SDK, CI/CD, Git Support, and more.

Get it now with:

uv pip install "skypilot>=0.11.0"Or, upgrade your team SkyPilot API server:

NAMESPACE=skypilot

RELEASE_NAME=skypilot

VERSION=0.11.0

helm repo update skypilot

helm upgrade -n $NAMESPACE $RELEASE_NAME skypilot/skypilot \

--set apiService.image=berkeleyskypilot/skypilot:$VERSION \

--version $VERSION --devel --reuse-valuesHighlights

[Beta] SkyPilot Pools: Batch inference across clouds & k8s

SkyPilot supports spawning a pool that launches a set of workers across many clouds and Kubernetes clusters (docs, #6260, #6426, #6459, #6552, #6591, #6665, #6675, #7332, #7963, #8008, #7876, #8047, #7930,#8039, #7846, #7855, #7919, #7920). Jobs can be scheduled on this pool and distributed to workers as they become available.

Key benefits include:

- Fully utilize your GPU capacity across clouds & k8s

- Unified queue for jobs on all infra

- Keep workers warm, scale elastically

- Step aside for other higher priority jobs; reschedule when GPUs become available

Learn more in our blog post.

[GA] Managed Jobs Consolidation mode

Consolidation Mode is general available (#7122, #7127, #7396, #7459, #7498, #7560, #7601, #7619, #7717, #7720, #7847, #8082, #8021, #8106). This enables:

- 6x faster job submission

- consistent credentials across the API server and jobs controller.

- Persistent managed jobs state on postgres

# config.yaml

jobs:

controller:

consolidation_mode: true # Currently defaults to False.

Efficient and Robust Managed Jobs

We have significantly optimized the Managed Jobs controller, allowing it to handle 2000+ parallel jobs on a single 8-CPU controller—an 18x improvement in job capacity with the same controller size (#7051, #7371, #7379, #7408, #7432, #7473, #7487, #7488, #7494, #7519, #7585, #7595, #7945, #7966, #7979,#8036, #8095).

Enterprise-Ready SkyPilot at Large-scale

- Support hundreds of AI engineers with a single SkyPilot API server instance

- Significant reduction in memory consumption and avoid OOM for API server

- CLI/SDK/Dashboard speed up with a large amount of clusters, jobs

- Comprehensive API server metrics for operation

- SSO support with Microsoft Entra ID

Kubernetes

UX, Robustness, Performance Improvement

- Robust SSH for SkyPilot cluster on Kubernetes

- Robust multi-node(pod) provisioning with retry and recovery (#7820, #7854, #7852)

- Improve resource cleanup after termination

- Intelligent GPU name detection

- Improved volume support: label support, name validation, SDK support.

Volume Support for Existing PVC and Ephemeral Volumes (#7915, #7971,#8179)

-

Exising PVC: Reference pre-existing Kubernetes PersistentVolumeClaims as a SkyPilot volume (#7915).

# volume.yaml name: existing-pvc-name type: k8s-pvc infra: k8s/context1 use_existing: true config: namespace: namespace

-

Ephemeral Volumes: automatically create volumes when a cluster is launched and deleted when the cluster is torn down, making them ideal for temporary storage across multiple nodes, such as caches and intermediate results.

# task.sky.yaml file_mounts: /mnt/cache: size: 100Gi

Cloud Support

CoreWeave Integration Announcement

CoreWeave now officially support SkyPilot (#6386, #6519, #6895, #7756, #7838). This integration provides: Infiniband Support, Object Storage, Autoscaling.

See the announcement on Coreweave blog.

AMD GPU Support Announcement

SkyPilot now fully supports AMD GPUs on Kubernetes clusters (#6378, #6944). This includes: GPU Detection and Scheduling, Dashboard Metrics, ROCm Support.

See the announcement on AMD Rocm blog.

More Clouds Support

- Together AI Instant cluster

- Seeweb support

User Experience

SkyPilot Templates

SkyPilot now ships predefined YAML templates for launching clusters with popular frameworks and patterns. Templates are automatically available on all new SkyPilot clusters. (#7935, #7965)

You can now launch a multi-node Ray cluster by adding a single line to your YAML’s run block:

run: |

# One-line setup for a distributed Ray cluster

~/.sky/templates/ray/start_cluster

# Submit your job

python train.pyProgrammability: SkyPilot Python SDK

SkyPilot Python SDK is significantly improved with:

- Type hints

- Log streaming

logs = sky.tail_logs(cluster_name, job_id, follow=True, preload_content=False)

for line in logs:

if line is not None:

if 'needle in the haystack' in line:

print("found it!")

break

logs.close()- Admin policy: build admin policy with helper functions

resource_config = user_request.task.get_resource_config()

resource_config['use_spot'] = True

user_request.task.set_resources(re...SkyPilot v0.11.0rc1

This is a preview of the upcoming 0.11.0 release!

Install the release candidate:

# Select needed clouds.

uv pip install 'skypilot[kubernetes,aws,gcp]==0.11.0rc1'Upgrade your remote API server:

NAMESPACE=skypilot # TODO: change to your installed namespace

RELEASE_NAME=skypilot # TODO: change to your installed release name

helm repo update skypilot

helm upgrade -n $NAMESPACE $RELEASE_NAME skypilot/skypilot \

--version 0.11.0-rc.1 \

--reset-then-reuse-values \

--set apiService.image=null # reset to default imageIf you try out 0.11.0rc1, please let us know in Slack!

SkyPilot v0.10.5

SkyPilot v0.10.5: Major Managed Jobs Efficiency Improvement, UX and SDK Usability Enhencement, and API Server Robustness Fixes

This release focuses on production stability, performance optimization, and fixing critical bugs that affected reliability in multi-user and Kubernetes environments. Key highlights include major performance improvements for managed jobs and the dashboard, resolution of race conditions and resource leaks, and expanded cloud/accelerator support.

This release includes 400+ merged pull requests with high-priority critical fixes and additional improvements spanning bug fixes, performance enhancements, new features, and comprehensive documentation updates.

Highlights

Managed Job Efficiency and Robustness Improvement

- 18x more jobs with the same jobs controller size – 2000+ parallel jobs on an 8-CPU controller (#7051, #7371, #7379, #7408, #7432, #7473, #7488, #7487, #7494, #7519, #7585, #7595).

- [Beta] Avoid separate job controller with the new

consolidation_mode(#7127, #7396, #7459, #7122, #7498, #7601, #7560, #7619, #7717, #7720).- Consistent credentials across API server and jobs controller

- 6x faster job submission,

sky jobs launch

# config.yaml

jobs:

controller:

consolidation_mode: true # Currently defaults to False.Performance and Robustness Improvement at a Large Scale

- 20x cluster status speedup at large scale (#7215, #7220, #7224, #7246, #7389, #7453, #7282, #7261, #7076, #7676).

- 6x jobs query and dashboard speedup (#7463, #7458, #7780, #7798, #7453).

API Server Robustness Improvement

- Significantly reduce memory consumption and avoid OOM for API server (#7240).

Python SDK Usability Improvements

- New Python SDK examples to easily scale out your jobs on any infra (#7335).

import sky

from sky import jobs as managed_jobs

for i in range(100):

resource = sky.Resources(accelerators='A100:8')

task = sky.Task(resources=resource,

workdir='.',

run='python batch_inference.py')

managed_jobs.launch(task, name=f'hello-{i}')- Admin policy robustness and better usability (#7827).

Integration

- CoreWeave managed Kubernetes and storage support (#7756, #6519, #7759).

- AMD GPU support and GPU metrics (#6944).

What's New

Additional Performance Improvements

- Migrated managed jobs and SkyServe to a gRPC architecture, reducing P95 latency by up to 86% and CPU usage by 20-30% (#7245, #6647, #6702, #7184).

- Optimized Kubernetes pod processing with streaming JSON, providing 2x speedup and 50% memory reduction for large clusters (#7469).

- Optimized database queries for

sky statusand API endpoints, improving mean response time by 10-35% (#7689, #7690, #7665, #7705, #7708). - Replaced standard JSON with high-performance

orjsonfor API serialization, improving tail latency for large payloads (#7734).

Critical Stability & Security Fixes

- Fixed critical race conditions in Docker operations ("container name already in use") (#7030), API server start/stop (#7534), and asynchronous job cancellation, preventing cluster leaks (#7511).

- Resolved critical Kubernetes resource leaks, including semaphore file leaks (

/dev/shmexhaustion) (#7678) and fusermount-server leaks (#7398). - Fixed system hangs caused by SSH threads (#7202), K8s SSH proxy blocking (#7537), and

BrokenProcessPoolcrashes when cancelingsky logs(#7607). - Addressed a critical OOM bug in the server caused by inefficient GPU name canonicalization in Kubernetes (#7080).

- Fixed security/privacy bugs where pending jobs were visible across users (#7581) and environment variables leaked between managed jobs (#7459).

- Prevents accidental cancellation of wrong requests by requiring exact ID matches when prefixes are ambiguous (#7730).

- Replaced insecure

curl | shpattern for fluent-bit installation with official package repositories (#7126).

UX Improvement

- Improved CLI error messages for Kubernetes resource constraints (#6814) and multi-node setup failures (#7001).

- Enhanced

sky downoutput to show a clear summary when multiple clusters fail or succeed (#7225, #7635). - Added a CLI spinner for short-running requests (like

sky status) to show activity when the server is under load ([#76...

SkyPilot v0.10.3.post2

This patch release is a minor bump over v0.10.3.post1 to fix robustness against Coreweave clusters, i.e., adding additional retries and fallback for the Kubernetes API calls:

- Fallback to file-based command execution on 431, 400 #7536 #7563

- Retry on transiant authentication issue with error code 403 #7568 #7574

To upgrade:

pip install "skypilot==0.10.3.post2"

# Restart your local API server

sky api stop; sky api start

Or, upgrade the API server:

NAMESPACE=skypilot

RELEASE_NAME=skypilot

VERSION=0.10.3

helm repo update skypilot

helm upgrade -n $NAMESPACE $RELEASE_NAME skypilot/skypilot \

--set apiService.image=berkeleyskypilot/skypilot:0.10.3.post2 \

--version $VERSION --devel --reuse-values

Full Changelog: v0.10.3.post1...v0.10.3.post2

SkyPilot v0.10.3.post1

This patch release is a minor bump over v0.10.3 to fix a dependency issue caused by a breaking change in uvicorn==0.36.0:

- Pin

uvicorndependency to mitigateAttributeError: 'Config' object has no attribute 'setup_event_loop'error. (#7287)

If you see an issue like above, upgrade your SkyPilot with:

pip install "skypilot==0.10.3.post1"

# Restart your local API server

sky api stop; sky api startIf you are using a remote API server, it should still work with v0.10.3.

SkyPilot v0.10.3

SkyPilot v0.10.3: 2-10x Performance Improvement, Better Observability, Production-Ready Authentication and More

SkyPilot v0.10.3 delivers 2-10x performance improvement, enhanced cloud integration, improved Kubernetes integration, and strengthened production reliability features for AI/ML workloads across clouds.

Highlights

Massive Performance Improvements: 2-10x more efficient

- 4x faster

sky status(#6883, #6858, #6868, #6871, #6882, #6940, #6948, #6892, #6908) - 2-10x speedup for dashboard pages, including users, infra, volumes (#7031, #7033, #6959, #7013, #7011, #7012)

- 2x speedup for remote PostgreSQL environments through connection pooling (#6998, #6897)

- Fixes request responsiveness and SSH lagging/disconnection issue in production (#6984, #6983, , #6889, #6963, #6947, #7015, #6966, #6938, #6957)

- Improved API server memory management (#7046, #7037, #6909)

Upgrade your SkyPilot API server to 0.10.3 and get the improvement out of the box!

Observability & Monitoring

More SkyPilot API server and cluster metrics can now be set up for better observability, including:

- Detailed cluster events (#6907, #6906, #6901, #6899, #6900, #6936, #6875)

- API server detailed metrics for request latency, event loop, and process resource usage (#7017, #6935, #6942, #6968, #7062)

- Request ID tracking for better debugging (#6933)

CoreWeave Integration: Autoscaler

CoreWeave autoscaler is now supported, with a single config field change (#6895):

kubernetes:

autoscaler: coreweaveSkyPilot API Server: Authentication & Production Environment

SkyPilot now supports Microsoft Entra ID SSO login (#7045, #7028), besides Okta and Google Workspace. See more docs for setting up SSO login for API server: https://docs.skypilot.co/en/latest/reference/auth.html#sso-recommended

What's New

UX Improvement

- Fix CLI auto-completion authentication issue (#6724)

- Fixed cluster ownership display (#6989)

- Fixed API logs for daemon request (#6841)

- SDK improvements for

tail_logs(#6902) - Responsive spinner for request blocking (#6905)

- Fixed SDK authentication for download endpoints (#6955)

- Enable log downloading in dashboard (#6999, #7000, #7003, #7010)

- Prevented clients from setting DB strings server-side (#7042)

- Improved logout error handling (#6874)

Storage & File Operations

- Fixed storage mounting on ARM64 instances (#7008)

- Improved chunk upload reliability (#6854)

- Rsync permission fixes for Kubernetes (#6951)

Enhanced Cloud Platform Support

Kubernetes Improvements

- Pod configuration change detection with warnings (#6912)

- Volume name validation (#6863)

- Worker service cleanup on termination (#7014)

- AMD GPU support improvements

Nebius Cloud

- Static IP configuration support (#7002)

- Docker image ID fixes (#6894)

- Pagination support for

list_instances(#6867) - Customizable API domain (#6888)

- Better network error messages (#6971)

- Various stability improvements (#6856, #6896)

AWS

- Elastic Fabric Adapter (EFA) automatic enablement for improved network performance (#6852)

- AWS Systems Manager (SSM) support with new

use_ssmflag for secure connections (#6830) - Improved VPC error messages and handling (#6746)

- Fixed CloudWatch region setting issues (#6747)

- Better teardown leak prevention (#7022)

RunPod

- Volume support for RunPod network volumes (#6949)

GPU Support

- Added B200 to common accelerators in

sky show-gpus(#7006)

Documentation & Examples

- New training examples: TorchTitan (#6677), distributed RL with game servers (#6988)

- Best practices for network and storage benchmarking (#6632)

- Clarified Ray runtime usage (#6783)

- SSM documentation improvements (#7050)

- Fixed broken documentation links (#7026, #7004)

Testing & CI/CD & Development

- Backward compatibility tests against stable releases (#6979)

- SSH lag unit tests (#6968)

- Improved smoke test reliability (#6913, #6958, #6967, #7019)

- Customizable Buildkite queue support (#7063)

- Fixed type errors in managed jobs (#6994)

Developer Experience

- Python 3.12 and 3.13 support (#5304, #6990)

- Performance measurement annotations (#6943)

- Better error handling for UV-only environments (#6893)